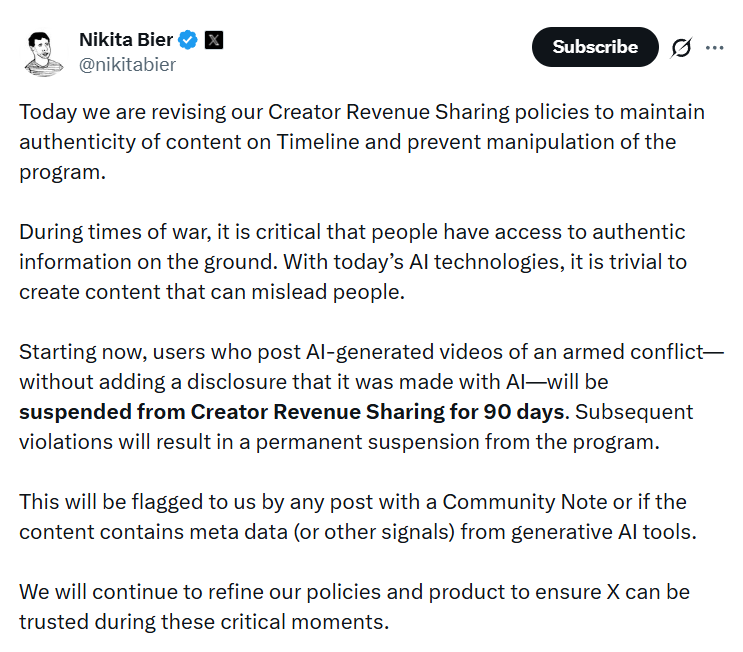

Social network X has announced temporary monetization restrictions for users who publish AI-generated videos of armed conflicts without proper labeling. The change was announced by Nikita Bier, product director for the platform's algorithmic feed.

Under the new rules, authors who distribute unlabeled AI-generated military content will be excluded from the revenue-sharing program for 90 days. Repeat violators will be permanently removed from the program. The platform does not plan to automatically delete such posts or block violators' accounts. The measures are financial sanctions rather than conventional content moderation.

Bier emphasized that during military conflicts, access to verified and reliable information becomes critically important. Modern generative AI tools can create visually convincing videos that are difficult to distinguish from real footage. This significantly increases the risk of disinformation, panic, and public opinion manipulation.

To detect fakes, the platform will rely on two mechanisms. The first is Community Notes, a crowdsourced fact-checking system that lets users add context and explanations to disputed posts. The second is AI-content detection, which analyzes file metadata and telltale signs of machine generation.

The policy update comes amid a sharp rise in AI-generated videos tied to recent geopolitical crises. Viral clips of simulated combat generated by neural networks spread widely, racking up millions of views and giving audiences a distorted picture of events.

Fake content was a problem on the platform long before this. Before generative models became widespread, users often uploaded gameplay from video games, particularly the military simulator Arma 3, and passed it off as real combat footage. Accessible AI generators, however, have made realistic forgeries far easier to produce and lowered the barrier to spreading them at scale.

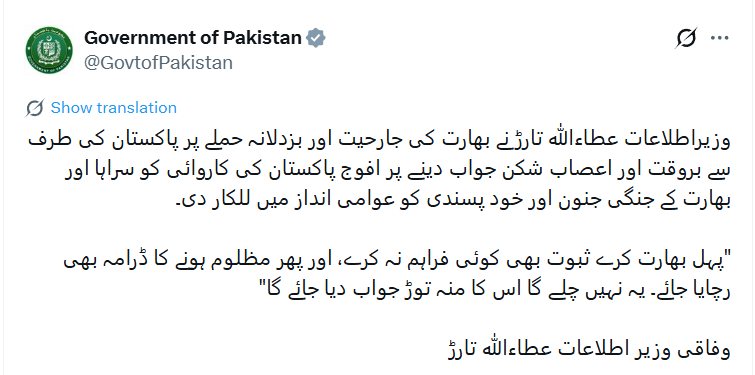

X's monetization model complicates the situation further. The platform pays premium account holders based on audience engagement: views, comments, and other interactions. This system inherently rewards highly viral content. In a competitive attention economy, it pushes some users to publish provocative or shocking material, including deepfakes, without checking the facts.

In practice, X's decision relies on economic pressure: the loss of revenue is meant to deter people from spreading unlabeled AI content. Critics point out, however, that because the posts themselves are not removed, the misinformation will keep circulating in the feed even while the author is cut off from payouts.

In effect, the platform shifts part of the responsibility for detecting fakes onto the community and automated algorithms, while limiting its own response to financial sanctions. This raises a broader question of how to balance freedom of publication, the platform's commercial interests, and the need to curb disinformation quickly during periods of international tension.

All content provided on this website (https://wildinwest.com/), including attachments, links, or referenced materials, is for informational and entertainment purposes only and should not be considered financial advice. Third-party materials remain the property of their respective owners.